Amazon cuts robotics jobs in a strategic signal: $200 billion bet on AI compute, with in-house AI chips the key to cost reduction

According to Zhitong Finance, Amazon (AMZN.US), the U.S. e-commerce and cloud computing giant, is cutting jobs in its strategically important robotics division. Some Wall Street analysts read the move alongside Amazon’s recent announcement that it will use large-scale compute clusters built on its in-house AI ASIC chips, Trainium and Inferentia, to develop and iterate its own large AI models. Taken together, they say, the two signal that the company is pursuing broader cost cuts while shifting its spending toward AI compute infrastructure. At the same time, Amazon is relying more and more on automation systems to support its fulfillment network.

According to media reports citing people familiar with the matter, this week’s layoffs affected “some robotics positions,” but the company is still actively hiring and investing in “several strategic areas.”

The latest layoffs bring the total number of corporate jobs Amazon has eliminated since 2022 to 57,000. They come as Amazon ramps up massive investments in artificial intelligence, data centers, and humanoid robots to maintain its position in the AI race and the physical AI megatrend.

Amazon Starts AI Cost Revolution! Striving for Training and Inference Autonomy

The move does not mean Amazon is neglecting its robotics business and projects. Rather, it is shifting resources away from robotics projects and positions with longer payback periods and toward AWS cloud resources, AI data centers, and its in-house AI ASIC chips. Amazon aims for “co-design of models and chips” so that it controls its own training and inference cost structure rather than being tied long-term to external GPU pricing.

Anthropic, known as the “OpenAI rival,” plans to spend tens of billions of dollars to acquire 1 million TPU chips. Facebook’s parent company Meta is considering investing tens of billions of dollars in late 2026 or 2027 in Google TPU compute infrastructure, including for its massive AI data center construction. Together with Amazon’s announcement that it will try using Trainium and Inferentia to develop large AI models, these moves all indicate that as cloud giants launch the “AI compute cost revolution” and drive AI ASIC adoption at scale, market concerns about Nvidia’s growth outlook are justified.

The company is cutting a relatively small number of positions on its robotics team while raising its 2026 capital expenditure projection to around $200 billion, aimed mainly at AWS’s core cloud systems and massive AI workloads. Meanwhile, AWS continues to advance its in-house AI chips, Trainium and Inferentia, and Amazon’s operations network has already deployed more than 1 million robots and uses generative AI models such as DeepFleet to improve robot scheduling efficiency.

On the company’s most recent earnings call, CEO Andy Jassy confirmed that Amazon will invest about $200 billion, with spending spread across all business units but concentrated on Amazon Web Services (AWS), its cloud division, because “our compute needs are huge, customers really want AWS to host core workloads and massive AI workloads, and the more capacity we install, the faster we can monetize it at scale.”

Meanwhile, Jassy described robotics as “a major initiative” for the company. With more than one million robots in its fulfillment logistics network, automation is now handling repetitive and hazardous tasks to significantly improve productivity and efficiency.

“We will continue to optimize inventory placement to shorten shipping distances, reduce the number of touches per package, and greatly enhance package consolidation, while rolling out more advanced robotics and automation technologies to improve efficiency and enhance customer experience,” Jassy said on the earnings call.

Even so, the decision to downsize the robotics division comes just weeks after Amazon abandoned development of “Blue Jay,” its multi-arm robotic system that had been slated for mass deployment in Amazon’s same-day delivery warehouses.

AI Compute Infrastructure: Top Priority Above All Else

Amazon’s management is shifting capital and talent away from complex robotics projects with longer payback periods and toward the AI compute infrastructure layer, which promises faster returns. Amazon confirmed that this week’s robotics layoffs came after further large-scale layoffs in January. At the same time, it raised its 2026 capex target to $200 billion, explicitly focused on AWS and AI compute infrastructure. Amazon has not abandoned its warehouse automation ambitions, however: last year it announced the deployment of its 1 millionth robot in its operations network and introduced DeepFleet, a generative AI model for scheduling robot fleets that it says can boost fleet efficiency by 10%. This indicates that the jobs being cut are likely in robotics projects and positions with low marginal returns, not a retreat from the “automation strategy” itself.

In other words, Amazon’s current cost plan resembles a typical reordering of the tech stack: first prioritize building a general-purpose AI platform and an in-house compute foundation, then feed this “cheap and scalable intelligence” back into its robotics and fulfillment network. This is not a case of “robots losing to AI,” but rather of robotics becoming a downstream application layer within Amazon’s AI platform strategy.

Looking at the relationship between robotics and AI data centers, Amazon appears to recognize that the core bottleneck of the future is first the economics of compute, and only then end-point automation. Robots will of course remain important, but in Amazon’s system they are increasingly the downstream execution layer. What really determines scalability, unit cost, and iteration speed is whether the upstream layer can train and deploy models at lower cost and reuse those capabilities for AWS customers, Nova, Alexa, Rufus, and warehouse scheduling and robot control.

On Wednesday, Amazon’s stock closed up nearly 4%, its best single-day performance since November, helped mainly by a technical rebound in tech stocks as market risk appetite rose. In addition, U.S. services sector growth hit its fastest pace since mid-2022, price pressures eased, and ADP employment data rebounded and beat expectations. The strong economic data temporarily offset the macroeconomic gloom caused by the geopolitical crisis in the Middle East. All three major U.S. stock indices rose, U.S. Treasuries and the dollar both fell, and cryptocurrencies, another risk asset, also rallied sharply.

Disclaimer: The content of this article solely reflects the author's opinion and does not represent the platform in any capacity. This article is not intended to serve as a reference for making investment decisions.

You may also like

SS&C's Raymond James 2026 Presentation: Assessing the Margin Expansion Thesis

Moderna’s $2.25 Billion Patent Settlement: Insider Stock Sales Under the Microscope

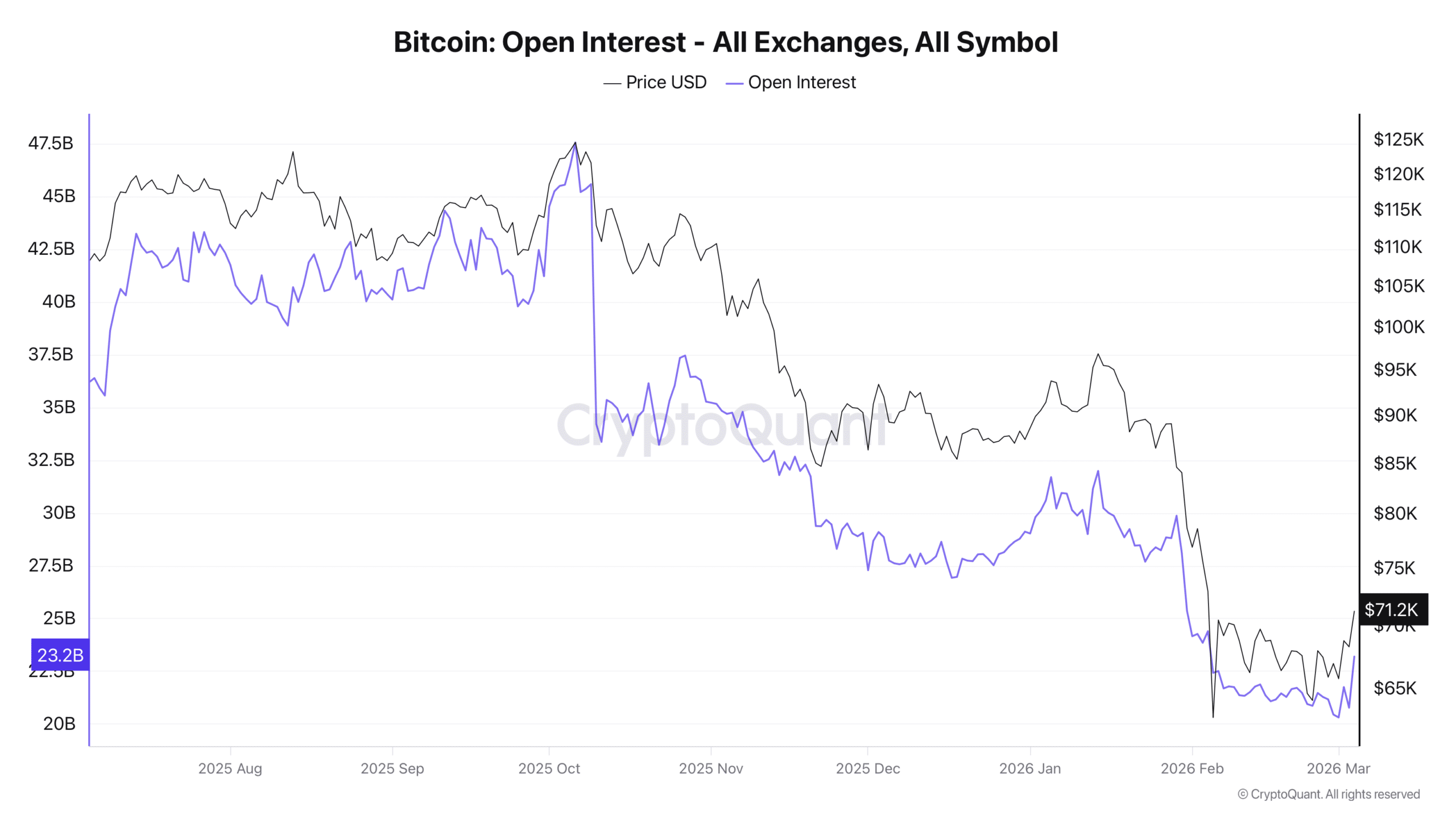

Bitcoin: Shorts still dominate BTC – But buyers are fighting back

Big Tech Joins White House Energy Pledge as Iran Tensions Threaten Higher Costs