DRAM and NAND Soar Together! Storage Chips Become the Hardest Currency in the AI Era, Micron and SanDisk Welcome the "AI Super Dividend"

According to Zhitong Finance, unprecedented demand from artificial intelligence and data centers is expected to keep DRAM and NAND prices climbing. Against that backdrop, the two major U.S. memory and storage makers, Micron Technology (MU.US) and SanDisk (SNDK.US), were again in focus for global investors as U.S. tech stocks rebounded strongly on Wednesday. Each closed nearly 6% higher, helping lift the storage sector and the Nasdaq Index. BNP Paribas recently released a report projecting that DRAM contract prices will surge 90% quarter-on-quarter in the first quarter of 2026, while NAND, whose price curve has traditionally been more stable, is set to climb 55%. The bank expects the uptrend to continue in the second quarter, extending the price increases that began in the second half of 2025.

BNP Paribas is not alone in its bullish outlook on storage prices. TrendForce recently raised its forecast for conventional DRAM contract prices in calendar Q1 2026 from a quarter-over-quarter increase of 55% to 60% to an increase of 90% to 95%, and sharply raised its NAND Flash contract price forecast to +55% to +60% QoQ. TrendForce points out that North American cloud providers are seeing surging demand for enterprise SSDs (eSSDs, the enterprise-grade drives used in data centers), which is expected to push eSSD prices up 53% to 58% QoQ in Q1. Together, these forecasts point to one conclusion: storage chips have become a focal point of the AI super-wave on par with Nvidia's AI chips, and represent one of the earliest supply-demand imbalances and clearest pricing bottlenecks of this cycle.

Storage price surge shows no sign of stopping; BNP Paribas bullish on continued explosive growth for Micron and SanDisk

Karl Ackerman, a senior analyst at BNP Paribas, wrote in a client note: “Our in-depth analysis of first-calendar-quarter contract prices for more than 50 DRAM SKUs and more than 75 NAND SKUs leads us to estimate that overall DRAM average selling prices could surge by a staggering 90% QoQ in Q1, followed by another sharp rise in Q2; the core reason is that ever-increasing demand for AI servers is creating a broader supply-demand imbalance and sustaining upward price pressure.”

“For NAND products, we forecast that calendar Q1 prices could soar by 55% QoQ, followed by another sequential increase in the second quarter. This trend is mainly driven by supply-side dynamics, as NAND suppliers continue redirecting capacity towards enterprise-grade, high-performance NAND products, while new capacity expansion remains extremely cautious.”

Ackerman set 12-month price targets as high as $500 for Micron and $650 for SanDisk. At Wednesday's U.S. close, Micron was up 5.55% at $400.77 and SanDisk had gained 5.95% to $599, so BNP Paribas’ targets imply that the bull run under way since 2025 still has room to go.
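To make the gap between the closing prices and the BNP Paribas targets concrete, the short sketch below computes the implied upside from the figures quoted above. The function name `implied_upside` and the `quotes` dictionary are illustrative, not part of the report, and the result is simple arithmetic rather than a forecast.

```python
def implied_upside(close: float, target: float) -> float:
    """Percentage gap between a price target and the latest close."""
    return (target / close - 1.0) * 100.0


# (closing price, 12-month target) in USD, as quoted in the article.
quotes = {
    "MU": (400.77, 500.0),
    "SNDK": (599.0, 650.0),
}

for ticker, (close, target) in quotes.items():
    print(f"{ticker}: {implied_upside(close, target):.1f}% implied upside")
```

On these numbers the targets sit roughly 25% above Micron's close and roughly 9% above SanDisk's.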

Ackerman also points out that spot prices were even stronger in February. Because spot prices tend to lead subsequent contract prices, the approaching round of contract renewals is a considerable source of optimism for the entire storage chip sector.

Ackerman explains, “During up cycles, DRAM and NAND spot prices often display a significant premium over contract prices. In February, market pricing indicated that consumer electronics-grade DDR4 spot prices rose 11% MoM (a stunning 1284% YoY) to $21.93/GB, while contract prices climbed 7% MoM (up 688% YoY) to $12.17/GB, meaning spot traded at an 80% premium to contract. Similarly, consumer-grade DDR5 spot rose 9% MoM (up 673% YoY) to $19.13/GB, while contract prices rose 3% MoM (up 384% YoY) to $11.04/GB, i.e., a 73% premium for spot over contract.”
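The premiums Ackerman cites follow directly from the quoted spot and contract prices. As a minimal check, the sketch below recomputes them; the helper `spot_premium` and the `february` table are illustrative names, and the prices are taken as quoted.

```python
def spot_premium(spot: float, contract: float) -> float:
    """Premium of the spot price over the contract price, in percent."""
    return (spot / contract - 1.0) * 100.0


# February prices as quoted in the article: (spot, contract).
february = {
    "DDR4": (21.93, 12.17),
    "DDR5": (19.13, 11.04),
}

for product, (spot, contract) in february.items():
    print(f"{product}: spot premium of about {spot_premium(spot, contract):.0f}%")
```

Both results round to the figures in the quote: about 80% for DDR4 and about 73% for DDR5.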

Additionally, several overseas media outlets reported on Wednesday that Samsung, the largest player in the storage industry, has raised DRAM prices by 100% or more. According to Korea Electronic News, Samsung finalized Q1 DRAM supply prices with major clients such as Apple last month, with average prices for server, PC, and mobile DRAM all rising about 100% from the previous quarter, that is, roughly doubling from Q4 2025 levels, and with hikes for some clients and products exceeding 100%. The report, citing industry insiders, said negotiations are complete and some overseas customers have already made payments. Compared with the 70% increase negotiated in January, this marks a further jump of 30 percentage points in just one month.
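The move from a 70% hike to a 100% hike is a 30-percentage-point change, which is not the same as a 30% higher price. The sketch below separates the two, assuming (this is an assumption, not something the report states) that both increases are measured against the same previous-quarter base price. The function name `price_multiple` is illustrative.

```python
def price_multiple(pct_increase: float) -> float:
    """Convert a percentage increase into a multiple of the base price."""
    return 1.0 + pct_increase / 100.0


january_terms = price_multiple(70.0)   # 1.7x the previous-quarter price
final_terms = price_multiple(100.0)    # 2.0x the previous-quarter price

# Difference between the two hikes, in percentage points.
point_gap = 100.0 - 70.0

# How much higher the final price is than the January-negotiated price.
relative_gap = (final_terms / january_terms - 1.0) * 100.0

print(f"Gap in percentage points: {point_gap:.0f}")
print(f"Final price vs. January terms: +{relative_gap:.1f}%")
```

Under that assumption, the finalized price is about 17.6% above what the January terms would have implied.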

The rapid price climb is reshaping contract practices across the global storage industry, especially as the reliance of GPU and TPU systems on HBM, DRAM, and enterprise-grade SSDs creates persistent supply-demand imbalances. Supply agreements have shortened from annual to quarterly terms and now sometimes have to be adjusted monthly, reflecting how severe the imbalance in the storage chip market has become.

“The Google AI computing power chain” and “Nvidia GPU chain” both depend on storage

Google's vast TPU clusters and Nvidia's enormous AI GPU clusters alike require HBM integrated alongside the AI chips. Beyond HBM, technology giants such as Google and OpenAI are accelerating the construction and expansion of AI data centers, all of which require large-scale purchases of server-grade DDR5 memory and high-performance enterprise SSD/HDD storage. Seagate and Western Digital dominate nearline, large-capacity HDDs, and SanDisk specializes in high-performance eSSDs, while Samsung, SK Hynix, and Micron hold leading positions in several core segments at once: HBM, server DRAM (including DDR5/LPDDR5X), and high-end data center eSSDs. As a result, these leaders are the direct beneficiaries of the “AI memory + storage stack” and are all enjoying the “super windfall” from massive AI infrastructure spending.

From a hardware standpoint, AI computing is limited not only by processing power but also by the capacity to move data. For Nvidia GPUs and TPU systems alike, the efficiency of large-scale model training and inference depends not just on the number of tensor cores or matrix units, but on how quickly weights, KV cache, activations, and intermediate tensors can be delivered to the compute cores.

From a cross-analysis of the semiconductor and AI data center infrastructure, storage chips have “perfectly positioned” themselves in the AI wave, because they benefit from both training and inference expansion, and they are the universal “toll station” across platforms, architectures, and ecosystems. As the AI era shifts from training to inference/Agents/long-context/retrieval-augmented paradigms, system demands for capacity, bandwidth, power efficiency, and persistent data layers will only intensify.

According to official Google documentation, Cloud TPU is equipped with HBM (high bandwidth memory) to support models with more parameters and larger batch sizes. For the “inference era,” Ironwood TPU further boosts HBM capacity and bandwidth. On Nvidia's side, the Blackwell Ultra architecture allows a single GPU to carry up to 288GB of HBM3e, while the GB300 NVL72 rack-scale system is designed to maximize long-context inference throughput by leveraging ultra-large HBM capacity. In other words, without HBM, the peak performance of GPUs and TPUs cannot be fully realized; memory bandwidth and capacity determine whether large models can “run bigger, faster, and at full speed.”

Furthermore, the real storage systems relied upon by AI data centers have never been just HBM. The comprehensive AI storage stack is as follows: HBM provides high-speed data near the accelerator, DDR5/RDIMM/LPDRAM extend host memory and handle data preprocessing, while enterprise SSDs cover persistent data paths for training datasets, checkpoints, vector databases, RAG retrieval, and inference caches. For example, Micron officially defines AI data center storage as a “comprehensive portfolio” covering training and inference; its eSSD product line is explicitly intended to keep the AI pipeline well-fed for both training and inference stages. TrendForce also points out that as the era of AI inference arrives, North American cloud computing giants are sharply ramping up high-performance storage purchases, with eSSD demand far surpassing expectations. In short, AI GPU clusters depend on storage, and Google TPU clusters are no exception—the only difference is accelerator brand, but the underlying data storage must always be built upon a full HBM + server DRAM + NAND/SSD pyramid.

Citi analysts, in their latest storage price outlook, are even more aggressive than UBS, Nomura, and JPMorgan, advocating a “super storage cycle.” They believe that, driven by the proliferation of AI Agents (i.e., agent-based AI workflows) and skyrocketing AI CPU memory requirements, storage chip prices will run out of control in 2026. Citi accordingly raised its 2026 DRAM ASP growth forecast from 53% to 88%, and its NAND ASP growth forecast from 44% to 74%. Driven by both AI training and inference needs, server DRAM ASPs are expected to surge by 144% YoY in 2026 (prior estimate: +91%). For a mainstream product such as 64GB DDR5 RDIMM, Citi projects that the price will reach $620 in the first quarter of 2026, up 38% QoQ and well above its previous forecast of $518.
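Two further figures can be backed out of the 64GB DDR5 RDIMM projection: the previous-quarter price implied by a 38% QoQ rise to $620, and the size of Citi's revision relative to its earlier $518 forecast. The sketch below is a back-of-envelope derivation from the quoted numbers only; the variable names are illustrative, and the implied prior-quarter price is not a figure Citi is reported to have published.

```python
projected_q1_price = 620.0   # USD, Citi projection for Q1 2026
qoq_increase_pct = 38.0      # quoted quarter-on-quarter increase
previous_forecast = 518.0    # USD, Citi's earlier Q1 2026 forecast

# Price in the prior quarter implied by the QoQ growth rate.
implied_prior_quarter = projected_q1_price / (1.0 + qoq_increase_pct / 100.0)

# Size of the forecast revision relative to the earlier forecast.
revision_pct = (projected_q1_price / previous_forecast - 1.0) * 100.0

print(f"Implied prior-quarter price: ${implied_prior_quarter:.2f}")
print(f"Revision vs. previous forecast: +{revision_pct:.1f}%")
```

On these inputs the implied prior-quarter price is about $449, and the new projection is roughly 20% above the earlier one.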

Citi’s NAND call is similarly bold: alongside the higher 2026 NAND ASP growth forecast (+74%, up from +44%), enterprise SSD ASPs are expected to soar 87% YoY. According to Citi’s analysts, the storage chip market is entering an extremely intense seller’s market, with pricing power firmly in the hands of giants such as Samsung, SK Hynix, Micron, and SanDisk.

Disclaimer: The content of this article solely reflects the author's opinion and does not represent the platform in any capacity. This article is not intended to serve as a reference for making investment decisions.

You may also like

SS&C's Raymond James 2026 Presentation: Assessing the Margin Expansion Thesis

Moderna’s $2.25 Billion Patent Settlement: Insider Stock Sales Under the Microscope

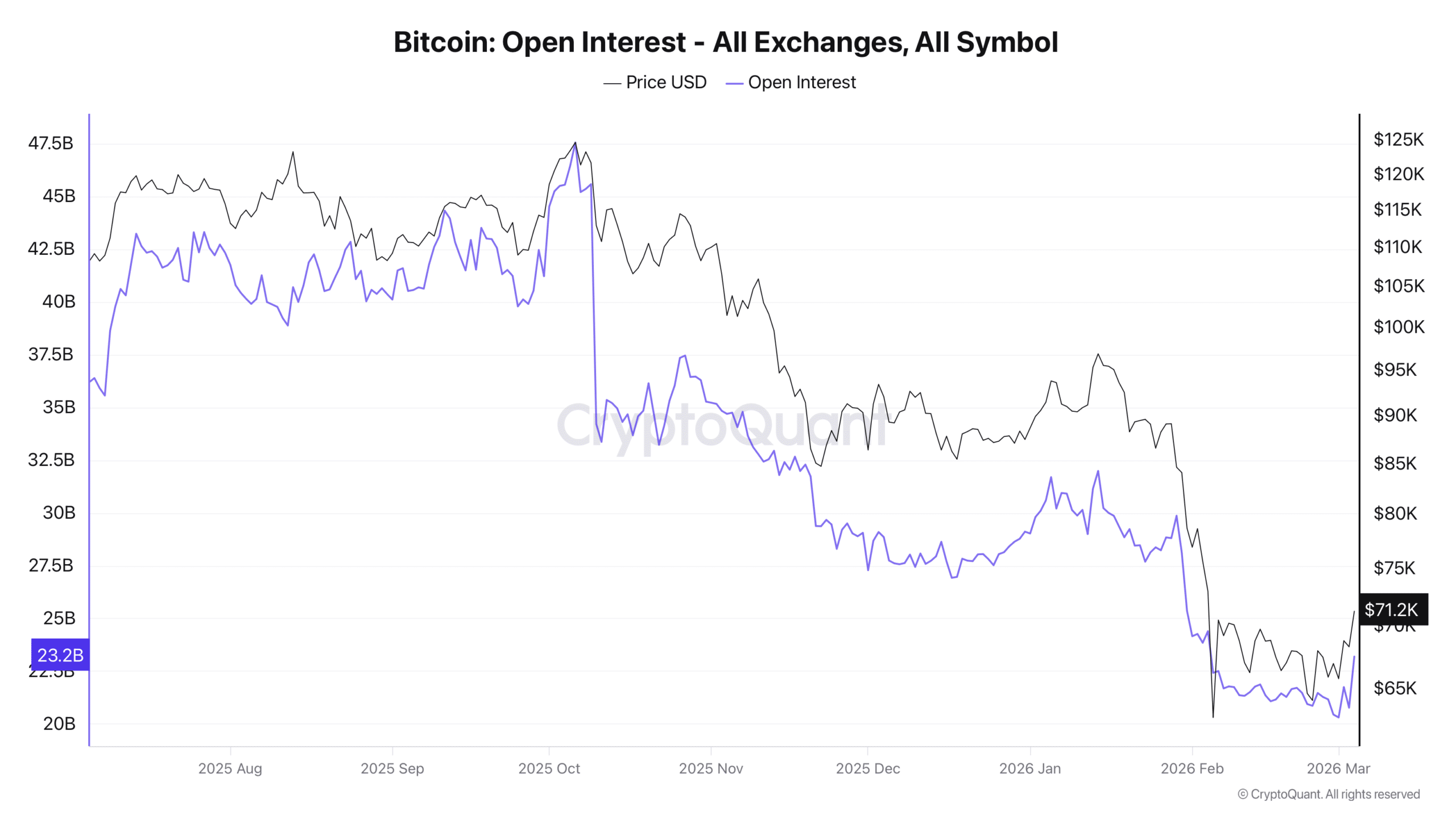

Bitcoin: Shorts still dominate BTC – But buyers are fighting back

Big Tech Joins White House Energy Pledge as Iran Tensions Threaten Higher Costs